AMD finally launches Ryzen AI Halo in 2026 to compete with Nvidia

AMD Ryzen AI Halo delivers 16 CPU cores and 32 threads for AI workloads

NEWS

Felipe Veríssimo

1/24/2026 · 2 min read

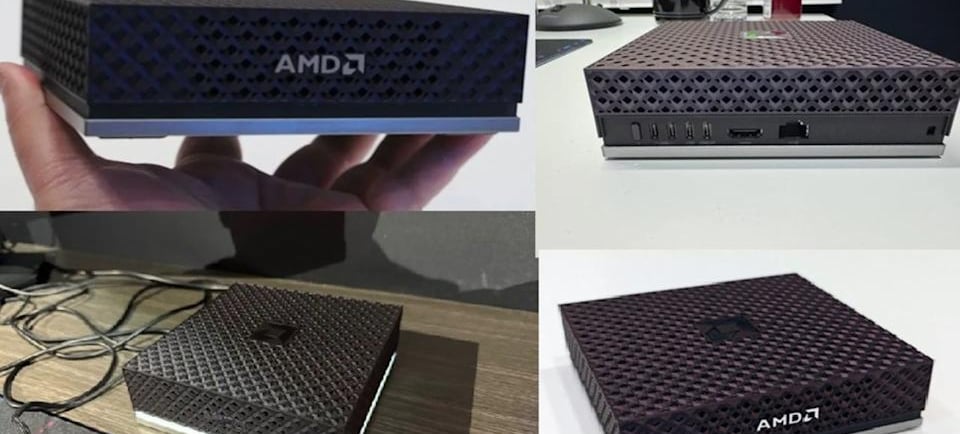

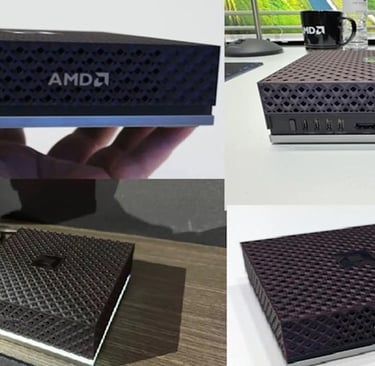

AMD Ryzen AI Halo is a mini workstation featuring the Strix Halo APU, designed to run generative AI, games, and heavy workloads locally, targeting developers and content creators who want to reduce dependency on cloud services.

What is Ryzen AI Halo?

It's a compact "mini PC" system based on the Ryzen AI Max+/Strix Halo APU, combining CPU, integrated Radeon GPU, and NPU (Neural Processing Unit) in a single chip.

It was designed to compete directly with solutions like the Nvidia DGX Spark, offering a sort of "AI mini supercomputer" for office, studio, or home office use.

Architecture and specifications

The CPU offers up to 16 Zen 5 cores and 32 threads, with boost clocks of up to about 5.1 GHz, bringing desktop-class performance to a compact form factor.

The integrated GPU, a Radeon 8060S based on RDNA 3.5, has 40 Compute Units and can reach dozens of TFLOPS in FP16. That is enough for 1080p gaming as well as for accelerating AI models and rendering.
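Where a figure like "dozens of TFLOPS" comes from can be sketched with the usual peak-throughput formula: compute units × shader lanes per CU × FLOPs per lane per clock × clock speed. The sketch below is a back-of-the-envelope estimate, not an AMD specification. It assumes 64 lanes per CU and a GPU clock of about 2.9 GHz, neither of which the article states, and it treats the extra multipliers for packed FP16 and dual-issue as assumptions too.

```python
def peak_tflops(compute_units: int, clock_ghz: float,
                lanes_per_cu: int = 64,
                flops_per_lane_per_clock: int = 2) -> float:
    """Theoretical peak throughput in TFLOPS.

    Assumptions (not from the article):
    - lanes_per_cu=64 shader lanes per compute unit.
    - flops_per_lane_per_clock=2 counts one fused multiply-add (FMA) as 2 FLOPs.
      Packed FP16 and dual-issue could each double this (assumed, not measured).
    """
    flops_per_second = (compute_units * lanes_per_cu
                        * flops_per_lane_per_clock * clock_ghz * 1e9)
    return flops_per_second / 1e12


if __name__ == "__main__":
    # 40 CUs at an assumed ~2.9 GHz, counting only FMA: about 14.8 TFLOPS.
    print(f"FMA only: {peak_tflops(40, 2.9):.1f} TFLOPS")
    # Assumed 4x extra (packed FP16 x dual-issue): about 59.4 TFLOPS.
    print(f"FP16, assumed 8 FLOPs/lane/clock: "
          f"{peak_tflops(40, 2.9, flops_per_lane_per_clock=8):.1f} TFLOPS")
```

Real workloads rarely approach these theoretical peaks; memory bandwidth and kernel efficiency usually dominate.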

The NPU, built on the XDNA 2 architecture and dedicated to AI operations, contributes around 50 TOPS; combined with the CPU and GPU, the platform reaches approximately 120–126 TOPS.

The system supports up to 128 GB of high-bandwidth unified memory shared by the CPU, GPU, and NPU. This is crucial for running large models locally, such as LLMs with tens of billions of parameters under aggressive quantization.
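Why quantization matters here comes down to simple arithmetic: weight memory is roughly parameters × bits per weight ÷ 8. The sketch below estimates whether a model's weights fit in a memory budget. The 1.2× overhead factor (for KV cache, activations, and runtime buffers) is a hypothetical rule of thumb, and the 128 GB default budget is optimistic, since part of the unified memory is normally reserved for the operating system.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: float) -> float:
    """Approximate memory for model weights only, in decimal GB.

    Ignores KV cache, activations, and framework overhead.
    """
    total_bytes = params_billions * 1e9 * bits_per_weight / 8
    return total_bytes / 1e9


def fits_in_memory(params_billions: float, bits_per_weight: float,
                   budget_gb: float = 128.0, overhead: float = 1.2) -> bool:
    """Check whether weights plus a hypothetical overhead factor fit the budget."""
    return weight_memory_gb(params_billions, bits_per_weight) * overhead <= budget_gb


if __name__ == "__main__":
    for bits in (16, 8, 4):
        gb = weight_memory_gb(70, bits)
        print(f"70B @ {bits}-bit: {gb:.0f} GB weights, fits: {fits_in_memory(70, bits)}")
```

For a 70B-parameter model, 16-bit weights need about 140 GB and do not fit, while 4-bit weights need about 35 GB and fit comfortably, which is why aggressive quantization is the typical route for models of that size on a 128 GB machine.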

Focus on local AI and development

Ryzen AI Halo is built for local AI: it supports ROCm on Windows and Linux, letting developers run frameworks like PyTorch and other stacks optimized for Radeon GPUs without sending sensitive data to the cloud.
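A quick way to confirm that a PyTorch install actually sees the Radeon GPU is sketched below. It relies on the fact that ROCm builds of PyTorch reuse the `torch.cuda` API and set `torch.version.hip`. The function name `describe_torch_backend` is hypothetical, and the sketch assumes a ROCm-enabled PyTorch build is installed; if PyTorch is missing or no GPU is visible, it reports that instead of failing.

```python
def describe_torch_backend() -> str:
    """Report which accelerator backend PyTorch can use (hypothetical helper)."""
    try:
        import torch
    except ImportError:
        return "PyTorch is not installed"

    # On ROCm builds this holds the HIP version string; on CUDA/CPU builds it is None.
    hip_version = getattr(torch.version, "hip", None)

    if torch.cuda.is_available():
        # ROCm builds expose AMD GPUs through the same "cuda" device type.
        device_name = torch.cuda.get_device_name(0)
        backend = f"ROCm (HIP {hip_version})" if hip_version else "CUDA"
        # Run a small matmul to check that GPU kernels actually execute.
        x = torch.randn(256, 256, device="cuda")
        _ = x @ x
        torch.cuda.synchronize()
        return f"{backend} GPU: {device_name}"

    return "No GPU backend visible to PyTorch; running on CPU"


if __name__ == "__main__":
    print(describe_torch_backend())
```

Because ROCm maps onto the `cuda` device type, most existing PyTorch code that targets `"cuda"` should run without changes on a ROCm build.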

This approach appeals to companies and creators that work with proprietary data, face compliance requirements, or need low latency, for example with internal chatbots, document analysis, real-time computer vision, or edge MLOps pipelines.

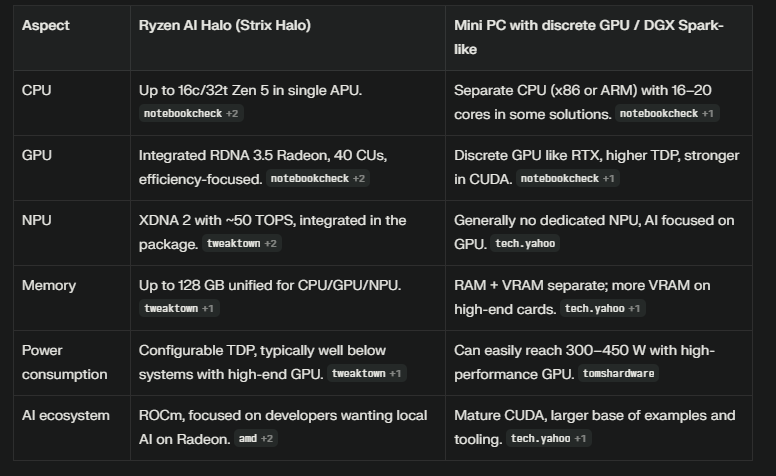

Comparison with traditional solutions

Who it makes sense for

AI developers who want to prototype, train, and run models locally without relying on cloud services 24/7.

Content creators who need a compact, quiet machine with enough power for editing, streaming, AI-powered content generation (image, video, text), and 1080p/1440p gaming.

Small teams and labs that need a "desktop AI server" with good energy efficiency and unified memory to experiment with LLMs, RAG, and data pipelines in a controlled environment.